
Research Interests:

Graduate Student, University of Alberta

Sara is currently pursuing her MSc in Computing Science at the University of Alberta, supervised by Dr. Bailey Kacsmar in the PUPS: Practical Usable Privacy and Security Lab.


Education

Master of Science in Computing Science [Winter 2026 - Present]


Bachelor of Science in Computer Science [Spring 2021 - Fall 2024]

Research


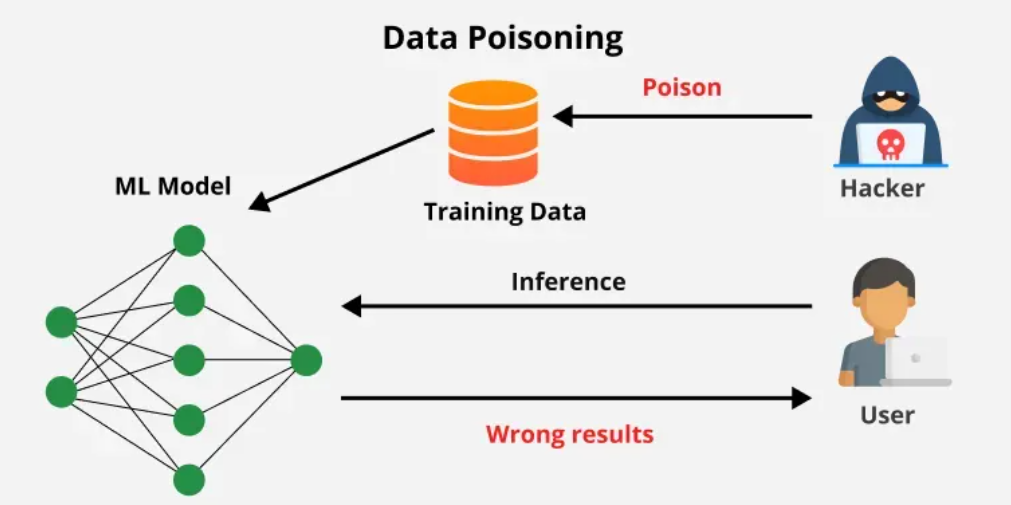

Proposing a New Attack Vector for LLM Poisoning Attacks

2026

Poisoning Attacks, LLMs, Machine Learning, Privacy


Evaluating Theory-Informed Linguistic Features and Large Language Models for Reading Comprehension Question Classification

2026

Learning Analytics, Educational Data Mining, Natural Language Processing


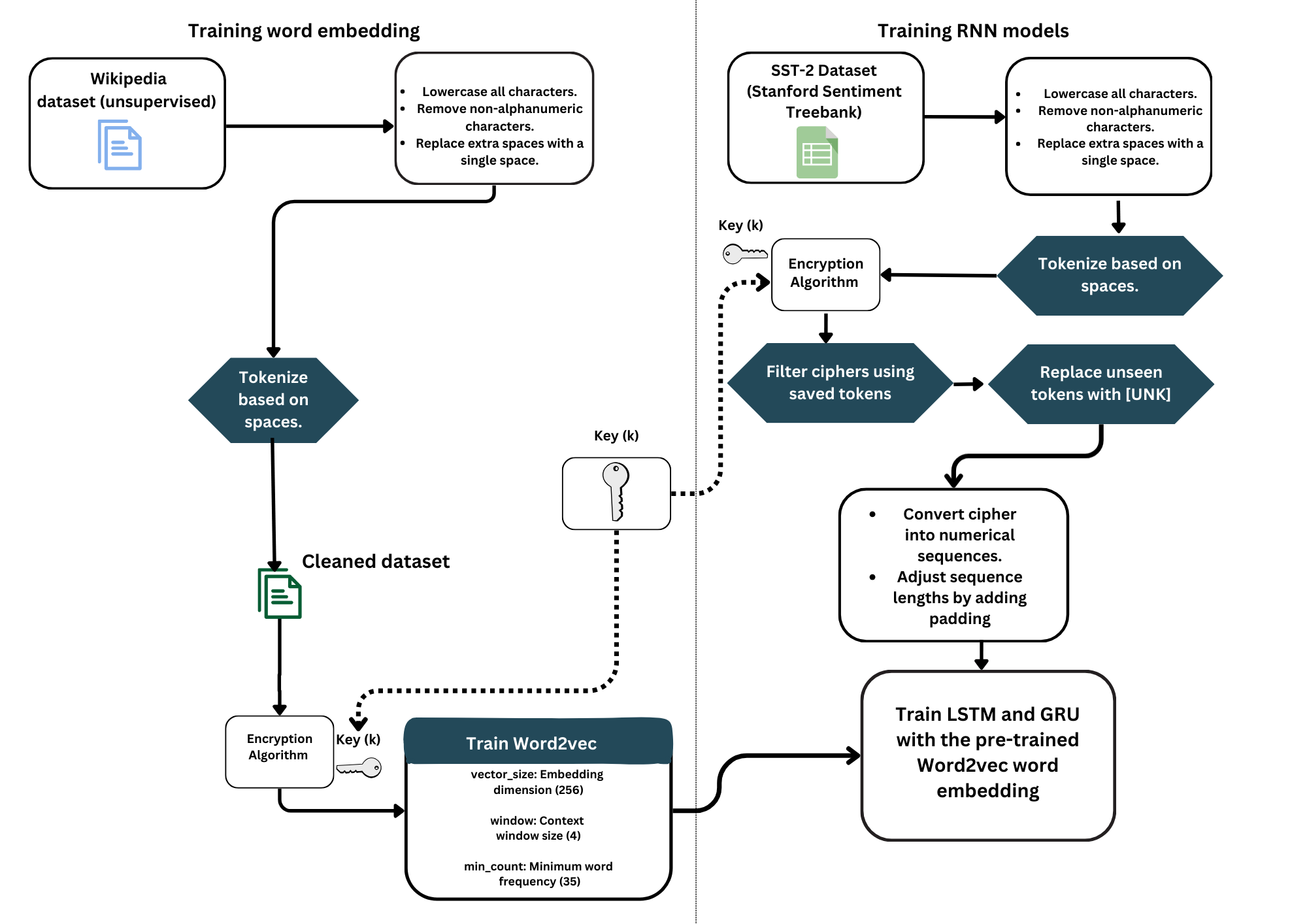

Encrypting Sentiments: A Study on Integrating Encryption Module with NLP Pipeline to Analyze Emotions While Ensuring Security

2024

Natural Language Processing, Privacy, Cryptography


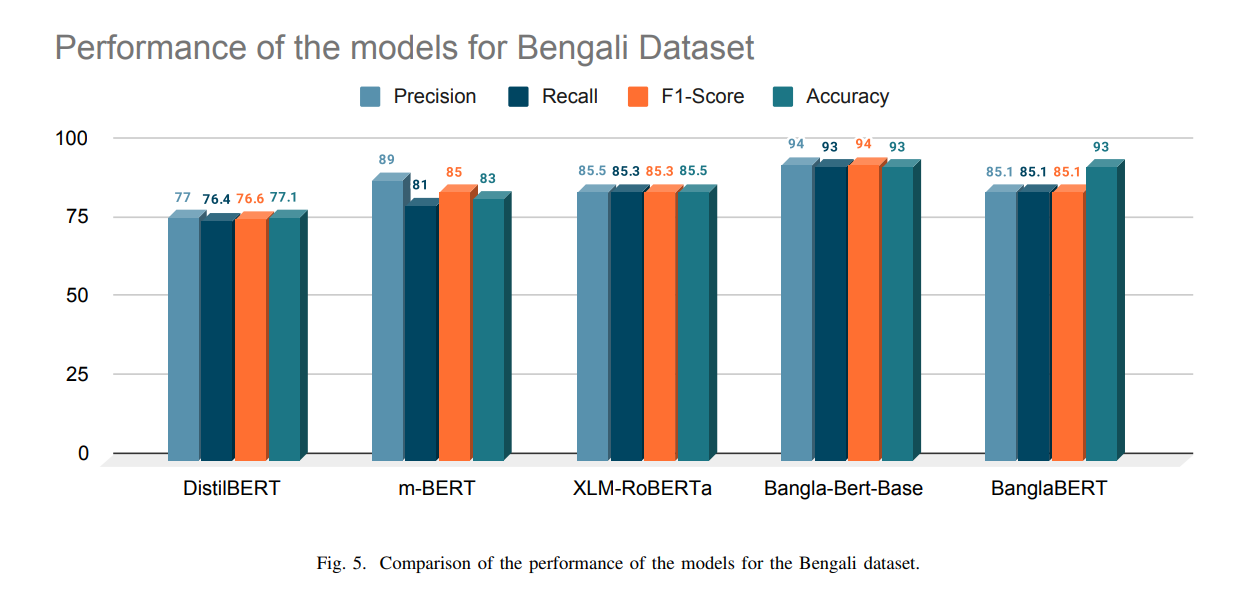

Automated Detection of Online Comments Using Transformers

2023 IEEE International Conference on Communication, Networks and Satellite (COMNETSAT)

Natural Language Processing, Fine-tuning Transformers


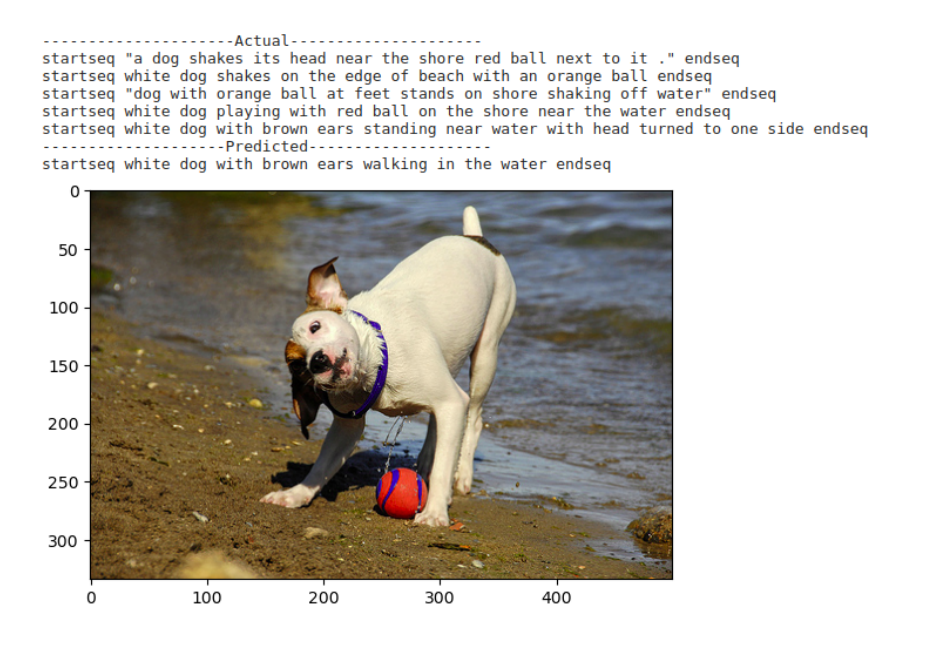

Automated Image Caption Generation using Deep Learning

2023 26th International Conference on Computer and Information Technology (ICCIT)

Natural Language Processing, Image Captioning, Computer Vision
